OpenAI Town Hall with Sam Altman

In the relentless flood of AI news, it’s easy to get lost in the hype. Every day brings a new model, a new demo, or a new dire prediction. Cutting through the noise to find genuinely meaningful insights about where we are heading is a constant challenge. That’s why when a central figure like OpenAI’s CEO, Sam Altman, holds a candid town hall with builders and developers, it’s worth paying close attention.

This wasn’t a polished keynote or a carefully managed press release. It was a direct conversation offering a raw look into the thinking that is shaping our technological future. The discussion moved beyond simple capabilities to address the complex, second-order effects of truly powerful AI—on our jobs, our creativity, our businesses, and even our safety.

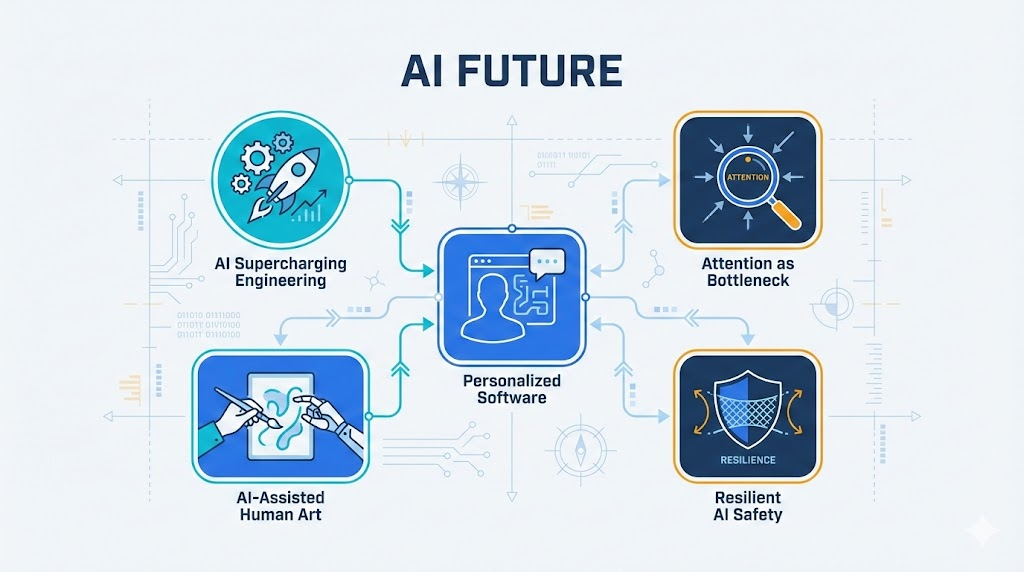

This post distills the five most impactful and counter-intuitive takeaways from that conversation. These are the insights that reframe common fears, challenge baked-in assumptions, and provide a clearer map for anyone building, creating, or simply trying to navigate our AI-powered world.

AI Won’t Kill Software Engineering — It Will Supercharge It

A common fear is that AI, by making code generation nearly free, will eliminate the need for software engineers. Sam Altman argues the exact opposite is true, citing a version of the Jevons paradox: as a resource becomes cheaper to use, total demand for it can rise rather than fall. As AI makes creating software faster and less expensive, he expects demand for highly customized, bespoke software to explode. The reason for this explosion is a fundamental shift in how we will use computers: a move toward software made for an audience of one.

This is a crucial reframing of the narrative from job displacement to job transformation. For builders, the strategic implication is clear: the most valuable engineering skill is shifting from the mechanical act of writing code to the architectural act of directing AI to solve problems. It will involve less time typing and debugging and more time orchestrating systems, a change that will empower far more people to create value. This explosion in creation, however, creates a new and even more pressing problem.

I think what it means to be an engineer is going to super change… the demand for software seems to not be slowing down at all and my guess on the future is a lot of us use software that was literally written for one person or a very small number of people and we’re constantly customizing our own software.

Building Is Easy. Getting Attention Is the Real Bottleneck.

Drawing on his earlier career, Altman notes, “before this I used to run Y Combinator and… the consistent thing you’d hear from startup founders is I thought the hard part of this was going to be building a product and the hard part is getting anyone to care or to use it.” AI has made the act of creation radically easier, which only intensifies the true, perennial challenge: go-to-market (GTM).

This insight adds a further complication for entrepreneurs. Not only is it easier for everyone to build, but builders are also using AI to “automate sales [and] automate marketing,” creating an arms race for the ultimate scarce commodity: human attention. In a world of AI-driven abundance, the strategic lesson for any founder is that a technical moat is not enough. The most durable advantage lies in mastering distribution and building a brand that can capture attention in an increasingly noisy, AI-saturated landscape.

…the consistent thing you’d hear from startup founders is I thought the hard part of this was going to be building a product and the hard part is getting anyone to care or to use it… human attention remains like this very limited thing and so you’re always going to be competing with other people…

The Future of Software Is Personal and Written “Just for You”

Our fundamental relationship with software is about to change. We are moving away from the era of static, one-size-fits-all applications. Altman envisions a future where our tools are not fixed but are constantly evolving and being rewritten in real time to suit our individual needs, quirks, and workflows.

The implication for product builders is profound. Instead of being passive users who adapt to a rigid application, we will become active directors of software that adapts to us. This means the new frontier of innovation lies in creating systems that learn, personalize, and evolve with the user, rather than simply shipping fixed features. The winning products will be those that feel less like a tool and more like a personalized extension of the user’s own mind.

I no longer think of software as this static thing if I have a little problem I expect the computer to like write some code right away and get it solved for me… that idea that our kind of tools are constantly evolving and converging just for us that seems like it’s going to happen…

We Don’t Want AI Art, We Want AI-Assisted Human Art

A fascinating phenomenon has emerged from AI image generation: people have a dramatically lower appreciation for art they believe was made entirely by an AI, even when blind tests show they prefer its aesthetic. When they discover a piece they admire is machine-made, their satisfaction plummets.

The deeper meaning here is that we aren’t just drawn to an artifact; we are drawn to the human story and connection behind it. “When I finish reading a book that I love,” Altman explains, “the first thing I want to do is like look up the author and understand their life… because I felt this connection to this person.” An AI-generated novel, no matter how brilliant, leaves us “sad and crestfallen.” Crucially, this negative reaction dissipates if the art is even “a little bit human directed.” For creators, the lesson is that their role as a director, curator, and storyteller is more valuable than ever. In a world of infinite content, the human brand behind the work is the ultimate source of meaning.

when I finish reading a book that I love the first thing I want to do is like look up the author and understand their life… I think if I read a great novel and at the end I learned it was written by an AI would sort of be kind of sad and crestfallen.

AI Safety Is Shifting from “Blocking” to “Resilience”

When confronting major AI risks, Altman’s tone becomes one of grave concern. “I am very nervous about where things are,” he states, identifying biosecurity as a “reasonable bet” for what could go “really wrong… this year.” He argues that the world’s current strategy of blocking access and preventing misuse will not hold up as a long-term approach.

Instead, he proposes a mental model shift based on a historical parallel: fire safety. Fire was a revolutionary tool that also burned down cities. Society’s solution wasn’t to ban fire but to develop resilience to it through fire codes, flame-resistant materials, and sprinkler systems. For AI, this means the strategic focus must shift from a brittle strategy of prevention to a robust one of resilience—building the societal and technological infrastructure to withstand and recover from failures when they inevitably occur.

…the shift that I think the world needs to make for AI security generally… is to move from one of like blocking to one of resilience… we came up with fire code and flame resistant materials and a bunch of other things… and now we’re pretty good at that as a society. I think we need to think about AI the same way.

Conclusion: Navigating the New World

Taken together, Altman’s insights paint a cohesive picture of the new value chain. As AI supercharges engineering (Takeaway 1) to create a world of hyper-personal software (Takeaway 3), the primary economic bottleneck shifts from the act of creation to the finite resource of human attention (Takeaway 2). This scarcity of attention, in turn, elevates the value of authentic human creativity and connection (Takeaway 4). Supporting this entire ecosystem requires a new foundation of societal resilience, not just prevention, to manage the profound risks (Takeaway 5).

This framework provides a map for navigating the complex future ahead. It suggests that as AI automates technical execution, our greatest value shifts to less tangible skills. This leads to the essential question we must all now consider: how do we systematically cultivate the high agency, resilience, and adaptability needed to thrive in a world of constant, AI-driven change?
